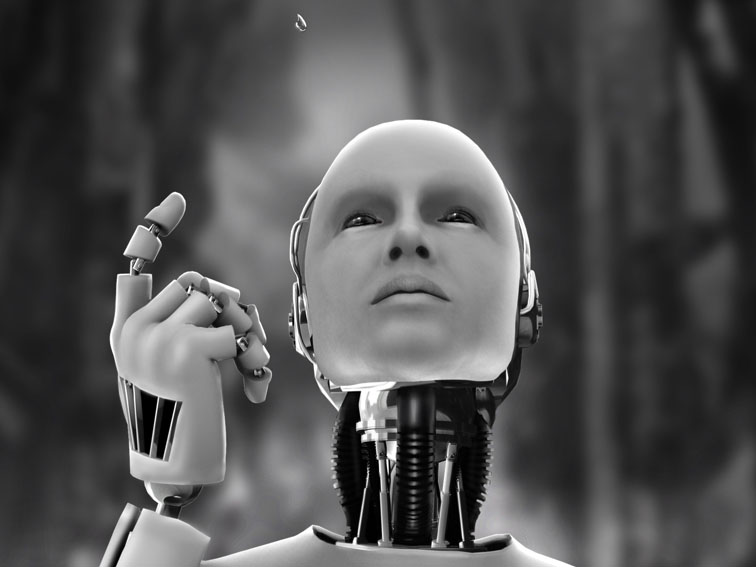

AI ≠ Sentience. That pretty much says it all, yet the conversation about AI almost always turns out to be not really about AI, but about AIs reaching Sentience. Call it the killer-robot syndrome.

But the future will need many more, and more varied, AIs than it will need Sentient ones. That doesn't mean an AI won't converse with you the way most humans do; it will just stop short of choosing to kill you because you cast aspersions on its parentage.

That will leave the courts trying to determine which AIs are Sentient enough to have legal rights and which merely need to be reprogrammed, and whether an AI gets to choose to receive system updates or not.

Scary? Maybe, but we will be interacting with AIs very soon, sooner than most believe possible. And we will get used to it, and that familiarity will naturally lead to Sentience being the norm.